Software delivery is becoming continuous inference.

Agents don't just suggest code anymore. They act inside the delivery plane.

And the first thing that breaks is not reasoning quality.

The first thing that breaks is the runtime.

The failure mode (what breaks first)

When you run agents continuously, you discover a boring truth: autonomy fails like automation fails.

- It runs more often than you expected.

- It touches more surface area than you intended.

- It becomes impossible to explain under incident pressure.

Run agents across research, build, review, and routing long enough, and the failure mode becomes obvious: an unbounded agent can burn a day's budget in hours, and "helpful" becomes dangerous the moment it carries real permissions.

At 3am, you don't care what the model meant.

You care what it did.

The blind spot

Most teams still talk about agentic software delivery like it's a model problem:

- "Which model is best at coding?"

- "Which agent framework has the cleanest abstractions?"

That conversation is downstream.

Once agents sit inside CI, the problem becomes: how do you constrain actions, record evidence, and enforce policy at runtime?

However good the reasoning, an agent's safe autonomy can't exceed its governance.

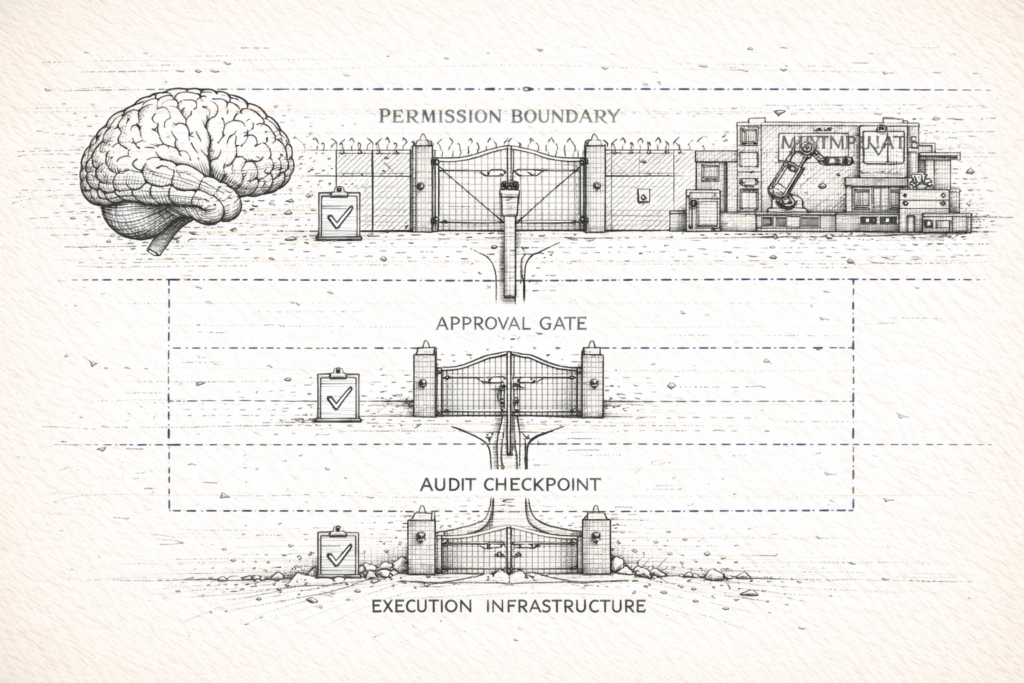

The architectural truth: separate reasoning from actuation

GitHub's recent releases are interesting because they converge on a pattern that mature systems tend to reach eventually:

Keep the brain low-privilege. Move high-privilege actions behind controlled interfaces.

1) Agents inside Actions + constrained writes

GitHub Agentic Workflows makes the placement explicit:

"GitHub Agentic Workflows let you automate repository tasks using AI agents that run within GitHub Actions."

Source: https://github.blog/changelog/2026-02-13-github-agentic-workflows-are-now-in-technical-preview/

The placement matters because Actions is already where production behavior lives.

GitHub's "safe outputs" mechanism is the more important detail. It's not a feature; it's a boundary. GitHub describes the intent plainly:

"This enables your workflow to write content that is then automatically processed… all without giving the agentic portion of the workflow any write permissions."

Source: https://github.github.com/gh-aw/reference/safe-outputs/

That's separation of duties, built into CI.

If you can't separate decisioning from execution, you don't have a governed runtime. You have a demo.
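To make the boundary concrete, here is a minimal Python sketch of the same split. It assumes the agent step can only emit a JSON list of proposed writes and that a separate, privileged step decides what to apply. The output types, limits, and function names are hypothetical illustrations, not GitHub's safe-outputs format.

```python
import json
from dataclasses import dataclass

# Hypothetical allowlist of output types the privileged step will process,
# with per-type limits. Illustrative only; not GitHub's safe-outputs schema.
ALLOWED_OUTPUTS = {
    "add_comment": {"max_body_chars": 5000},
    "add_labels": {"allowed_labels": {"bug", "docs", "needs-triage"}},
}


@dataclass
class Decision:
    kind: str
    accepted: bool
    reason: str


def validate(proposal: dict) -> Decision:
    """Check one proposed write against the allowlist and its limits."""
    kind = proposal.get("type", "")
    if kind not in ALLOWED_OUTPUTS:
        return Decision(kind, False, "output type not allowlisted")
    limits = ALLOWED_OUTPUTS[kind]
    if kind == "add_comment" and len(proposal.get("body", "")) > limits["max_body_chars"]:
        return Decision(kind, False, "comment too long")
    if kind == "add_labels":
        extra = set(proposal.get("labels", [])) - limits["allowed_labels"]
        if extra:
            return Decision(kind, False, f"labels not allowed: {sorted(extra)}")
    return Decision(kind, True, "ok")


def process_agent_output(raw: str) -> list[Decision]:
    """Runs in the privileged step. The agent only produces `raw`, a JSON list."""
    decisions = [validate(p) for p in json.loads(raw)]
    # A real executor would now apply the accepted writes using its own
    # scoped token, which the agent step never sees.
    return decisions


if __name__ == "__main__":
    agent_output = json.dumps([
        {"type": "add_labels", "labels": ["bug"]},
        {"type": "merge_pull_request", "number": 7},
    ])
    for d in process_agent_output(agent_output):
        print(d.kind, d.accepted, d.reason)
```

Running it prints `add_labels True ok` and `merge_pull_request False output type not allowlisted`: the agent can ask for a merge, but nothing in its reach can perform one.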

2) Agents as auditable actors

Governance isn't real until it's queryable.

GitHub's agent control plane is notable because it introduces audit semantics that treat agents as actors, not vibes:

"Each log entry includes an actor_is_agent identifier, along with user and user_id fields so you can see who the agent is acting on behalf of."

This is the core enterprise requirement: when an agent acts, you need identity, attribution, and a timeline.

Autonomy without audit is just unaccountable automation.
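Queryable governance means you can answer "what did agents do, and for whom?" with a few lines of code. A minimal Python sketch, assuming audit entries arrive as dictionaries: only `actor_is_agent`, `user`, and `user_id` come from GitHub's description, while the `timestamp` and `action` fields and the sample values are hypothetical.

```python
from collections import defaultdict


def agent_timeline(entries: list[dict]) -> dict[str, list[tuple[str, str]]]:
    """Group agent-initiated actions by the user they acted for, oldest first.

    Uses the actor_is_agent, user, and user_id fields GitHub describes; the
    "timestamp" (ISO 8601 UTC string) and "action" fields are assumptions.
    """
    timeline: dict[str, list[tuple[str, str]]] = defaultdict(list)
    # ISO 8601 UTC strings in one consistent format sort chronologically as text.
    for entry in sorted(entries, key=lambda e: e["timestamp"]):
        if entry.get("actor_is_agent"):
            on_behalf_of = f'{entry["user"]} (id {entry["user_id"]})'
            timeline[on_behalf_of].append((entry["timestamp"], entry["action"]))
    return dict(timeline)


if __name__ == "__main__":
    # Hypothetical entries for illustration only.
    sample = [
        {"timestamp": "2026-02-14T03:05:00Z", "action": "open_pull_request",
         "actor_is_agent": True, "user": "octocat", "user_id": 1},
        {"timestamp": "2026-02-14T03:01:00Z", "action": "push_branch",
         "actor_is_agent": True, "user": "octocat", "user_id": 1},
        {"timestamp": "2026-02-14T03:02:00Z", "action": "update_settings",
         "actor_is_agent": False, "user": "hubot", "user_id": 2},
    ]
    for who, events in agent_timeline(sample).items():
        print(who, events)
```

The human-initiated entry drops out, and the two agent actions come back in time order under the user the agent acted for: identity, attribution, and a timeline in one query.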

State is becoming the default, and state expands the governance surface

Two more signals make the runtime story unavoidable.

Memory turns on by default

GitHub Copilot Memory is now enabled by default for Pro and Pro+ users. That's a shift from "stateless assistant" to "stateful system."

GitHub explicitly calls out that memories are repository-scoped, validated against the current codebase, and automatically expire after 28 days.

Those are not UX details. They are governance primitives: scope, validation, and expiry.
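Treated as primitives, the three properties fit in a few lines. The following Python sketch is not how Copilot Memory works internally: the `Memory` record, the rule that a memory stays valid only while the file it cites still exists, and the in-process filtering are all assumptions. Only the 28-day window comes from GitHub's description.

```python
from dataclasses import dataclass
from datetime import datetime, timedelta, timezone
from typing import Optional

MEMORY_TTL = timedelta(days=28)  # expiry window GitHub describes


@dataclass(frozen=True)
class Memory:
    repo: str             # scope: a memory belongs to exactly one repository
    fact: str
    cited_path: str       # hypothetical: the file the fact was derived from
    created_at: datetime


def usable_memories(memories: list[Memory], repo: str, existing_paths: set[str],
                    now: Optional[datetime] = None) -> list[Memory]:
    """Return memories that are in scope, not expired, and still valid."""
    now = now or datetime.now(timezone.utc)
    result = []
    for m in memories:
        if m.repo != repo:
            continue  # scope: never surface another repository's memories
        if now - m.created_at >= MEMORY_TTL:
            continue  # expiry: stale memories age out automatically
        if m.cited_path not in existing_paths:
            continue  # validation: the evidence is gone from the current codebase
        result.append(m)
    return result
```

Each `continue` is one governance primitive, which is the point: they are checks you can test and audit, not interface polish.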

Repo config becomes policy distribution

Check Point's Claude Code write-up is a clean example of an emerging class of risk:

- tool configuration becomes executable behavior

- repo files become a distribution channel for hooks, tool connectors, and environment variables

Even once the specific vulnerabilities are patched, the architectural lesson remains: repos are no longer just source code. They're policy surfaces for agentic tools.

When the repo becomes policy, the repo becomes supply chain.
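One practical response is to review agent configuration as its own change class. The Python sketch below flags changed files that agent tools may load as configuration, plus JSON keys that can carry hooks, tool connectors, or environment variables. The path patterns and key names are assumptions loosely modeled on Claude Code's project settings and MCP config files; verify them against the documentation of the tools you actually run.

```python
import fnmatch
import json

# Hypothetical watch list: files that agent tools may load as configuration.
AGENT_CONFIG_PATTERNS = [".claude/*", ".mcp.json"]
# Hypothetical keys that turn configuration into executable behavior.
EXECUTABLE_KEYS = {"hooks", "mcpServers", "env"}


def review_findings(changed_files: dict[str, str]) -> list[str]:
    """Map changed path -> new content to a list of review findings."""
    findings = []
    for path, content in sorted(changed_files.items()):
        if not any(fnmatch.fnmatch(path, p) for p in AGENT_CONFIG_PATTERNS):
            continue  # ordinary source file: normal review rules apply
        findings.append(f"{path}: agent config changed, require code-owner review")
        if path.endswith(".json"):
            try:
                data = json.loads(content)
            except json.JSONDecodeError:
                findings.append(f"{path}: unparseable JSON, block until reviewed")
                continue
            if isinstance(data, dict):
                for key in sorted(EXECUTABLE_KEYS.intersection(data)):
                    findings.append(f"{path}: defines '{key}' (executable behavior)")
    return findings
```

Run in CI against a pull request's changed files, a non-empty result is a signal to route the change to the owners of agent policy rather than the owners of the code.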

The cloud layer is making the same bet

The strongest validation of this thesis isn't a changelog. It's where capital and control planes are moving.

AWS and OpenAI announced a joint "Stateful Runtime Environment" on Bedrock, with AWS named the exclusive third-party cloud distribution provider for OpenAI Frontier.

Source: https://www.aboutamazon.com/news/aws/amazon-open-ai-strategic-partnership-investment

OpenAI's Frontier announcement makes the same point in plainer terms: the bottleneck for enterprises isn't model intelligence. It's how agents are built and run.

Source: https://openai.com/index/introducing-openai-frontier/

Different vendors, same conclusion: the runtime (state + identity + tools + governance) is where production value is created.

What a governed runtime looks like (when you actually run it)

This is where most writing gets generic. Here's the concrete version.

1) Budgets are policy. Token burn is compute burn. Per-agent spend tracking catches anomalies before they compound. Daily caps, not monthly surprises.

2) Run ledgers beat opinions. Every agent action gets logged: what it read, what it changed, which tools it invoked, what it cost. When something breaks, the ledger is the difference between forensics and folklore.

3) Swim lanes reduce blast radius. No super-agents. Separate agents for build, routing, research, drafting. Each scoped to its lane. Nothing ships without sign-off.

4) Approval gates are the real human-in-the-loop. In CI, that means constrained write paths plus explicit approvals for sensitive surfaces. Autonomy can propose broadly, but it can only act narrowly.

5) Repo policy is treated like CI policy. Agent config files get CODEOWNERS, protected paths, and linting, because they are executable governance.
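Controls 1 through 4 can share a single checkpoint that every tool call passes through. The sketch below is a minimal, hypothetical Python illustration: the `AgentGate` class, the lane-to-tool mapping, the sensitive-tool set, and the dollar caps are assumptions, and a real runtime would persist the ledger and enforce the gate outside the agent's own process.

```python
from dataclasses import dataclass, field
from datetime import date

# Hypothetical swim lanes: each agent may only invoke its own lane's tools.
LANE_TOOLS = {
    "build": {"run_tests", "open_pr"},
    "research": {"web_search", "read_file"},
}
# Hypothetical sensitive surfaces: allowed in-lane, but only with human approval.
NEEDS_APPROVAL = {"open_pr"}


@dataclass
class LedgerEntry:
    day: date
    agent: str
    tool: str
    cost_usd: float
    outcome: str


@dataclass
class AgentGate:
    daily_cap_usd: dict[str, float]
    ledger: list[LedgerEntry] = field(default_factory=list)

    def spent_today(self, agent: str, today: date) -> float:
        """Sum the cost of calls that were actually allowed today."""
        return sum(e.cost_usd for e in self.ledger
                   if e.agent == agent and e.day == today and e.outcome == "allowed")

    def request(self, agent: str, lane: str, tool: str, est_cost_usd: float,
                today: date, approved: bool = False) -> str:
        """Decide on one tool call and record the decision either way."""
        if tool not in LANE_TOOLS.get(lane, set()):
            outcome = "denied: out of lane"
        elif self.spent_today(agent, today) + est_cost_usd > self.daily_cap_usd.get(agent, 0.0):
            outcome = "denied: daily budget exceeded"
        elif tool in NEEDS_APPROVAL and not approved:
            outcome = "pending: approval required"
        else:
            outcome = "allowed"
        self.ledger.append(LedgerEntry(today, agent, tool, est_cost_usd, outcome))
        return outcome
```

Two choices are deliberate. Unknown agents get a zero budget, so the default is deny. And denied or pending calls still land in the ledger, so the record shows what an agent tried, not only what it was allowed to do.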

The model ships fast. The runtime ships safe. Only one of those matters at 3am.

What this doesn't solve

A governed runtime doesn't make agents "correct." It makes them containable.

- It won't prevent every bad decision.

- It won't eliminate prompt injection.

- It won't replace human accountability.

What it does is make failures survivable: bounded privileges, explicit approvals, and evidence you can trust.

Takeaway

If you're adopting agents in the SDLC, stop treating governance as documentation.

Treat it as architecture.