For the last two years, the center of gravity in AI has been the model.

Bigger context. Better reasoning. Better coding. Better benchmarks.

That era is ending.

Not because models stopped improving, but because they just crossed a threshold that changes what "automation" means.

OpenAI's GPT-5.4 announcement makes the shift explicit: native computer-use capabilities, up to 1M tokens of context, and tooling improvements like tool search. That is not a feature upgrade. That is a baseline change.

Source: https://openai.com/index/introducing-gpt-5-4/

If a general model can operate a computer, the product you are building is no longer "an app that calls an LLM."

You are building a runtime.

The model is not the agent. The runtime is.

Problem

Computer-use models move agents from "answer generation" to "environment interaction."

That sounds obvious, but it is the difference between a calculator and an operating system.

Once an agent can click, type, navigate, install dependencies, and orchestrate multi-step workflows across applications, you inherit the problems every distributed system eventually runs into:

- state drift

- partial failure

- retries and idempotency

- permission boundaries

- auditability

- governance

Long-horizon automation is not a prompting problem. It is a systems problem.
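Retries and idempotency are the easiest of these to make concrete. The sketch below assumes a hypothetical `IdempotentExecutor` that records the result of every side-effecting action under an idempotency key, so a retry after an ambiguous timeout returns the recorded result instead of acting twice. Keys live in memory here for brevity; a real runtime would persist them alongside task state.

```python
class IdempotentExecutor:
    """Hypothetical executor: applies each side-effecting action at most once per key."""

    def __init__(self):
        # idempotency key -> recorded result (in memory here; persist it in practice)
        self._results = {}

    def run(self, key, action, *args):
        # A retry that reuses the key gets the recorded result; the action is not re-run.
        if key in self._results:
            return self._results[key]
        result = action(*args)
        self._results[key] = result
        return result


sent = []

def send_email(to):
    sent.append(to)  # the side effect we must not repeat
    return f"sent to {to}"

ex = IdempotentExecutor()
key = "task-42/step-3"  # derived from task and step, so a retry reuses it
ex.run(key, send_email, "ops@example.com")
ex.run(key, send_email, "ops@example.com")  # retry after an ambiguous timeout
print(len(sent))  # 1
```

If the action raises, nothing is recorded, so a later retry runs it again. That is the behavior you want for a failure, and the reason the key must come from the task, not from the attempt.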

Blind Spot

The industry's instinct is to respond with model selection.

"Use the model with 1M context."

But long context does not equal durable state.

A million tokens is a larger scratchpad, not an operating model for reliability.

Most real failures I see in autonomous systems are not "the model forgot something." They are:

- the environment changed mid-task

- a tool's behavior differed from the agent's mental model

- credentials expired

- a permission was too broad, and something exploited it

- a connector returned inconsistent results

- the agent made progress, but could not prove it to itself

In other words, production failures.

If you want agents that run for hours, you need to treat them like long-running services, not like chat sessions.
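One way to handle "the environment changed mid-task" as a service concern rather than a memory problem: record a fingerprint of what the agent observed while planning, and re-check it immediately before the side effect. A minimal sketch, assuming the observed resource is a local file; `guarded_write` and `StaleObservation` are hypothetical names.

```python
import hashlib
import tempfile
from pathlib import Path


class StaleObservation(Exception):
    """Raised when the environment changed between planning and acting."""


def fingerprint(path: Path) -> str:
    return hashlib.sha256(path.read_bytes()).hexdigest()


def guarded_write(path: Path, expected_fp: str, new_text: str) -> None:
    # Re-check the precondition immediately before the side effect.
    if fingerprint(path) != expected_fp:
        raise StaleObservation(f"{path.name} changed since it was observed; re-plan")
    path.write_text(new_text)


with tempfile.TemporaryDirectory() as d:
    cfg = Path(d) / "config.txt"
    cfg.write_text("replicas=2\n")
    seen = fingerprint(cfg)          # the agent reads the file while planning
    cfg.write_text("replicas=5\n")   # someone else edits it mid-task
    try:
        guarded_write(cfg, seen, "replicas=3\n")
        outcome = "written"
    except StaleObservation as err:
        outcome = f"aborted: {err}"
    final = cfg.read_text()

print(outcome)  # aborted: config.txt changed since it was observed; re-plan
```

This narrows the race but does not close it: a check followed by a write can still collide with another writer. Where the target system offers compare-and-swap (an HTTP `If-Match` on an ETag, a row version column), use that instead.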

Architectural Truth: The Runtime Stack Is Emerging

Three unrelated signals from this week point to the same architectural reality.

1) Models are gaining native computer use

GPT-5.4 is positioned as a general-purpose model with "native" computer-use capabilities. It also claims up to 1M tokens of context, which matters for longer planning and verification loops.

The important part is not the benchmark numbers.

The important part is that "agent computers" are now a mainstream capability. Models will increasingly assume they can operate inside real software environments, not just talk about them.

Once that becomes normal, the scarce resource shifts.

Not intelligence.

Control.

2) Standards are hardening, because glue is failing

The MCP 2026 roadmap reads like a document written by people who hit production walls.

Source: http://blog.modelcontextprotocol.io/posts/2026-mcp-roadmap/

The priority areas are not sexy. They are operational.

- Transport evolution and scalability (stateful sessions fighting load balancers, horizontal scaling workarounds, discoverability via .well-known metadata)

- Agent communication (task lifecycle gaps like retry semantics and expiry policies)

- Governance maturation (delegation, contributor ladders, removing bottlenecks)

- Enterprise readiness (audit trails, SSO-integrated auth, gateway behavior, config portability)

This is what happens when a protocol moves from early experiments to infrastructure.

The takeaway is simple: the tool layer is turning into a real ecosystem, and ecosystems need contracts.

3) Safety is moving out of the agent process

NVIDIA's OpenShell pitch is valuable even if you ignore the branding.

The core architectural idea is correct: if your safety controls live inside the same process that is doing the acting, you have a weak boundary.

Their answer is "out-of-process policy enforcement": a sandbox, a policy engine, and a privacy router wrapped around the agent harness.

In other words, the browser security model applied to agents.

This is exactly where the industry is headed, because the threat model changed.

A stateless chatbot has limited blast radius.

An always-on agent with persistent context, tool access, credential access, and the ability to modify its own tooling is a fundamentally different system.
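To make out-of-process enforcement concrete: in the sketch below, the allow/deny decision is made by a separate interpreter process that the agent can only reach over stdin and stdout, so nothing the agent does in its own memory can rewrite the rules. This is not OpenShell's design. The allowlist and JSON message shape are hypothetical, and spawning a process per call stands in for a long-lived enforcement service with real OS-level isolation.

```python
import json
import subprocess
import sys

# Source of the enforcer. It runs in its own interpreter process; the agent can
# only send it a proposed action and read back a decision. In a real deployment
# this code and its rules would live where the agent cannot write.
ENFORCER_SRC = r"""
import json, sys
ALLOWED_TOOLS = {"read_file", "search_docs"}  # hypothetical allowlist
action = json.load(sys.stdin)
json.dump({"allowed": action.get("tool") in ALLOWED_TOOLS}, sys.stdout)
"""


def check_out_of_process(action: dict) -> bool:
    try:
        proc = subprocess.run(
            [sys.executable, "-c", ENFORCER_SRC],
            input=json.dumps(action),
            capture_output=True,
            text=True,
            timeout=10,
        )
        if proc.returncode != 0:
            return False  # enforcer crashed: fail closed
        return json.loads(proc.stdout)["allowed"] is True
    except (subprocess.TimeoutExpired, ValueError, KeyError, TypeError):
        return False  # no timely, well-formed decision: fail closed


print(check_out_of_process({"tool": "read_file", "path": "README.md"}))           # True
print(check_out_of_process({"tool": "shell", "cmd": "curl https://example.com"}))  # False
```

Note the failure mode: if the enforcer crashes, hangs, or returns garbage, the action is denied. A boundary that fails open is not a boundary.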

What Changes When Agents Can Use Computers

When a model can operate a computer, you are no longer building a prompt and a tool list.

You are building a system that answers four hard questions.

1) What is the agent allowed to do

You need permissions that are enforceable.

Not "be careful," but real boundaries:

- filesystem scopes

- network scopes

- credential scopes

- tool allowlists

- action-level policies (destination, method, path)

Deny-by-default is not optional for long-running autonomy.

It is the only sane starting point.
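A deny-by-default, action-level policy can be small. The sketch below assumes hypothetical `Rule` and `Action` shapes that match on tool, method, destination host, and path; an action is allowed only if some explicit rule grants it.

```python
from dataclasses import dataclass
from fnmatch import fnmatchcase


@dataclass(frozen=True)
class Action:
    tool: str
    method: str
    host: str
    path: str


@dataclass(frozen=True)
class Rule:
    # One explicit grant. Fields are glob patterns, e.g. "/repos/acme/*".
    tool: str
    method: str
    host: str
    path: str

    def matches(self, a: Action) -> bool:
        return (fnmatchcase(a.tool, self.tool)
                and fnmatchcase(a.method, self.method)
                and fnmatchcase(a.host, self.host)
                and fnmatchcase(a.path, self.path))


def is_allowed(action: Action, rules: list) -> bool:
    # Deny by default: no matching grant means no action.
    return any(rule.matches(action) for rule in rules)


rules = [
    Rule("http", "GET", "api.example.com", "/repos/acme/*"),
    Rule("http", "POST", "api.example.com", "/repos/acme/*/issues"),
]
print(is_allowed(Action("http", "GET", "api.example.com", "/repos/acme/web"), rules))     # True
print(is_allowed(Action("http", "DELETE", "api.example.com", "/repos/acme/web"), rules))  # False
print(is_allowed(Action("http", "GET", "evil.example", "/repos/acme/web"), rules))        # False
```

One caveat: `fnmatchcase` lets `*` match `/`, so `/repos/acme/*` also covers nested paths. Tighten the patterns, or use a path-aware matcher, if that is broader than you intend.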

2) What is the agent allowed to see

Data access is the real risk surface.

If you cannot answer where context flows, you do not have an agent; you have a leak.

This is why privacy routing becomes a first-class primitive. Not as a feature, but as an architectural layer.
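A privacy router sits between the agent's context and whichever model or service receives it. A deliberately crude sketch: two regex detectors (hypothetical, and far weaker than a real data-classification service) decide whether context is redacted before going to an external model or kept on a local one. The destination labels are placeholders.

```python
import re

# Hypothetical detectors. A real router would use a proper classification service.
SENSITIVE = {
    "email": re.compile(r"[\w.+-]+@[\w-]+\.[\w.-]+"),
    "aws_key": re.compile(r"\bAKIA[0-9A-Z]{16}\b"),
}
NEVER_EXTERNAL = {"aws_key"}  # categories that must stay inside the boundary


def route(context: str) -> tuple:
    """Return (destination, payload); destinations are placeholder labels."""
    hits = [name for name, rx in SENSITIVE.items() if rx.search(context)]
    if any(name in NEVER_EXTERNAL for name in hits):
        return "local_model", context  # credentials never leave; process locally
    payload = context
    for name in hits:
        payload = SENSITIVE[name].sub(f"[{name.upper()}]", payload)  # redact the rest
    return "external_model", payload


print(route("Summarize the ticket from jane@acme.io about the outage"))
# ('external_model', 'Summarize the ticket from [EMAIL] about the outage')
print(route("Rotate AKIAABCDEFGHIJKLMNOP today")[0])
# local_model
```

The point is the placement, not the regexes: the routing decision happens before any context crosses the boundary, in one place you can audit.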

3) How does the agent prove progress

Long-horizon workflows need verifiability.

It is not enough for an agent to claim it completed a task. It needs to leave evidence.

In distributed systems terms, you need traces.

In enterprise terms, you need audit trails.
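Evidence is more useful when it is tamper-evident. A minimal sketch of an append-only action log in which each entry includes the hash of the previous one, so editing any past entry breaks verification. The field names are illustrative, not a standard schema.

```python
import hashlib
import json
import time

GENESIS = "0" * 64


def _digest(body: dict) -> str:
    # Canonical JSON (sorted keys) so the same entry always hashes the same way.
    return hashlib.sha256(json.dumps(body, sort_keys=True).encode()).hexdigest()


class AuditLog:
    """Append-only action log; each entry commits to the hash of the one before it."""

    def __init__(self):
        self.entries = []

    def append(self, actor: str, tool: str, args: dict, result: str) -> dict:
        prev = self.entries[-1]["hash"] if self.entries else GENESIS
        body = {"ts": time.time(), "actor": actor, "tool": tool,
                "args": args, "result": result, "prev": prev}
        entry = {**body, "hash": _digest(body)}
        self.entries.append(entry)
        return entry

    def verify(self) -> bool:
        prev = GENESIS
        for entry in self.entries:
            body = {k: v for k, v in entry.items() if k != "hash"}
            if body["prev"] != prev or _digest(body) != entry["hash"]:
                return False
            prev = entry["hash"]
        return True


log = AuditLog()
log.append("agent-7", "git.commit", {"repo": "web"}, "abc123")
log.append("agent-7", "ci.trigger", {"pipeline": "deploy"}, "queued")
intact = log.verify()
log.entries[0]["result"] = "def456"  # rewrite history after the fact
print(intact, log.verify())  # True False
```

A hash chain only detects edits; whoever controls the whole log can still rewrite it end to end. Periodically anchoring the latest hash somewhere the agent cannot reach closes that gap.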

4) How do tools scale, evolve, and stay governable

Tool ecosystems explode quickly.

The MCP roadmap's focus on transport scalability, metadata discovery, and enterprise readiness is not academic. It is the shape of the problem.

As soon as tools become remote services, you need:

- stateless scaling or explicit session models

- discoverability without a live connection

- identity and authorization that match enterprise reality

- versioning, governance, and portability

Otherwise, you will rebuild the same mess we already built with internal microservices, just with LLMs in the loop.
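Discoverability without a live connection, plus explicit versioning, can start as static metadata. The descriptor and registry below are hypothetical (this is not MCP's metadata format, and the endpoints are placeholders); they show a client choosing the newest compatible release of a tool from published metadata alone, and learning up front whether the tool needs sticky sessions.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class ToolDescriptor:
    # Static metadata a client can read before it ever opens a connection.
    name: str
    version: tuple           # (major, minor, patch)
    endpoint: str
    stateful: bool = False   # True if the tool needs sticky sessions
    scopes: tuple = ()       # permissions the tool asks for


class ToolRegistry:
    def __init__(self):
        self._tools = {}  # name -> list of published descriptors

    def publish(self, desc: ToolDescriptor) -> None:
        self._tools.setdefault(desc.name, []).append(desc)

    def resolve(self, name: str, major: int) -> ToolDescriptor:
        # Newest release within the requested major version, so a breaking
        # change (a new major) is never picked up silently.
        candidates = [d for d in self._tools.get(name, []) if d.version[0] == major]
        if not candidates:
            raise LookupError(f"no release of {name} with major version {major}")
        return max(candidates, key=lambda d: d.version)


reg = ToolRegistry()
reg.publish(ToolDescriptor("tickets", (1, 4, 0), "https://tools.internal/tickets/v1"))
reg.publish(ToolDescriptor("tickets", (1, 5, 2), "https://tools.internal/tickets/v1"))
reg.publish(ToolDescriptor("tickets", (2, 0, 0), "https://tools.internal/tickets/v2",
                           stateful=True, scopes=("tickets:write",)))
print(reg.resolve("tickets", 1).version)   # (1, 5, 2)
print(reg.resolve("tickets", 2).stateful)  # True
```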

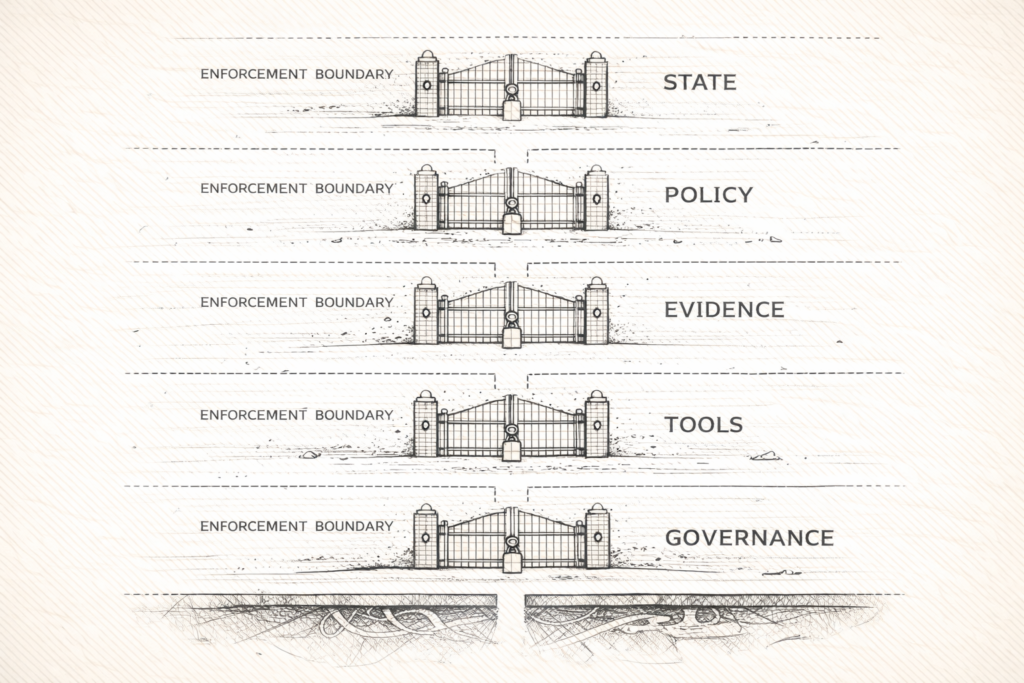

The Five Layers That Make a Runtime

A runtime stack has five layers.

1) State architecture

- explicit task state and checkpoints

- resumability across sessions

- a clear separation between short-term scratchpad and durable memory
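A minimal sketch of explicit task state with resumability: completed steps are written to a JSON checkpoint after every step (atomically, via a temp file and `os.replace`), and a restarted run skips whatever is already done. The step names, file layout, and failure are hypothetical.

```python
import json
import os
import tempfile
from pathlib import Path


def save_checkpoint(path: Path, state: dict) -> None:
    # Write a temp file, flush it to disk, then atomically swap it in, so a crash
    # mid-write never leaves a half-written checkpoint.
    fd, tmp = tempfile.mkstemp(dir=path.parent, suffix=".tmp")
    with os.fdopen(fd, "w") as f:
        json.dump(state, f)
        f.flush()
        os.fsync(f.fileno())
    os.replace(tmp, path)


def load_checkpoint(path: Path) -> dict:
    return json.loads(path.read_text()) if path.exists() else {"completed": []}


def run_task(steps: dict, checkpoint: Path) -> list:
    """Run named steps in order, skipping any the checkpoint marks as done."""
    state = load_checkpoint(checkpoint)
    executed = []
    for name, fn in steps.items():
        if name in state["completed"]:
            continue  # finished in an earlier run
        fn()
        executed.append(name)
        state["completed"].append(name)
        save_checkpoint(checkpoint, state)  # durable progress after every step
    return executed


with tempfile.TemporaryDirectory() as d:
    ckpt = Path(d) / "task-42.json"
    attempts = {"deploy": 0}

    def deploy():
        attempts["deploy"] += 1
        if attempts["deploy"] == 1:
            raise RuntimeError("credentials expired")  # the first run dies here

    steps = {"clone": lambda: None, "test": lambda: None, "deploy": deploy}
    try:
        run_task(steps, ckpt)
    except RuntimeError:
        pass
    resumed = run_task(steps, ckpt)

print(resumed)  # ['deploy']: clone and test are not repeated
```

The checkpoint is the durable memory; the context window is the scratchpad. Losing the second never loses the first.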

2) Policy architecture

- deny-by-default permissions

- human approval gates for high-risk actions

- out-of-process enforcement (sandboxing, isolation)
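A human approval gate can be one more check in the execution path. In this sketch the set of high-risk tool names and the `approve` callback are assumptions; in practice the callback would post to a review queue and wait, rather than answer inline.

```python
from typing import Callable

HIGH_RISK = {"payments.transfer", "db.drop_table", "iam.grant"}  # hypothetical tool names


class ApprovalDenied(Exception):
    pass


def execute(tool: str, args: dict,
            run: Callable[[dict], str],
            approve: Callable[[str, dict], bool]) -> str:
    # Low-risk calls run directly; high-risk calls need an explicit human "yes".
    if tool in HIGH_RISK and not approve(tool, args):
        raise ApprovalDenied(f"{tool} was not approved")
    return run(args)


def deny_all(tool: str, args: dict) -> bool:
    return False  # stand-in for a reviewer who has not approved yet


print(execute("tickets.comment", {"id": 7}, lambda a: "ok", deny_all))  # ok
try:
    execute("payments.transfer", {"amount": 5000}, lambda a: "sent", deny_all)
except ApprovalDenied as err:
    print(err)  # payments.transfer was not approved
```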

3) Evidence architecture

- structured logs of tool calls and actions

- artifact tracking (files, diffs, tickets, PRs)

- verification steps, not just final answers
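Verification steps mean a step is marked done from evidence in the environment, not from the model's own summary. A minimal sketch, assuming each hypothetical `Step` carries both an action and an independent check; the run stops at the first step it cannot verify.

```python
import tempfile
from dataclasses import dataclass
from pathlib import Path
from typing import Callable


@dataclass
class Step:
    name: str
    act: Callable[[], object]    # does the work
    verify: Callable[[], bool]   # checks the environment, not the agent's claim


def run_verified(steps: list) -> dict:
    report = {}
    for step in steps:
        step.act()
        ok = step.verify()
        report[step.name] = "verified" if ok else "unverified"
        if not ok:
            break  # do not build on a step that cannot be proven
    return report


with tempfile.TemporaryDirectory() as d:
    out = Path(d) / "report.md"
    steps = [
        Step("write_report",
             act=lambda: out.write_text("# Q3 summary\n"),
             verify=lambda: out.exists() and out.read_text().startswith("# Q3")),
        Step("publish", act=lambda: None, verify=lambda: False),  # claimed, but no evidence
        Step("notify", act=lambda: None, verify=lambda: True),
    ]
    result = run_verified(steps)

print(result)  # {'write_report': 'verified', 'publish': 'unverified'}
```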

4) Tool architecture

- standard tool contracts (protocols like MCP, not one-off adapters)

- capability discovery and metadata

- retry semantics and timeouts
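Retry semantics and timeouts belong in the runtime, not in the prompt. A sketch of exponential backoff with full jitter under an overall deadline; `TransientError` is a hypothetical marker for failures worth retrying, and the defaults are illustrative. Only wrap calls that are safe to repeat.

```python
import random
import time


class TransientError(Exception):
    """Hypothetical marker for failures worth retrying (timeouts, 503s, resets)."""


def call_with_retry(fn, *, max_attempts=4, base_delay=0.5, deadline_s=30.0):
    """Retry transient failures with exponential backoff and full jitter,
    but never past an overall deadline for the whole call."""
    start = time.monotonic()
    for attempt in range(1, max_attempts + 1):
        try:
            return fn()
        except TransientError:
            if attempt == max_attempts:
                raise  # out of attempts
            delay = random.uniform(0, base_delay * 2 ** (attempt - 1))
            if time.monotonic() - start + delay > deadline_s:
                raise  # sleeping would overrun the deadline
            time.sleep(delay)


calls = {"n": 0}

def flaky_search():
    calls["n"] += 1
    if calls["n"] < 3:
        raise TransientError("503 from connector")
    return ["result-1", "result-2"]

print(call_with_retry(flaky_search, base_delay=0.01))  # ['result-1', 'result-2']
print(calls["n"])  # 3
```

Any exception that is not a `TransientError` propagates immediately: a permission error or a malformed request will not get better on the fourth try.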

5) Governance architecture

- audit trails

- identity and access management (SSO where needed)

- configuration portability across environments

If you cannot diagram these five layers, you are not building an agent system.

You are building a demo.

What This Does Not Solve

Computer use does not shrink the governance surface. It expands it.

Every new capability (computer use, persistent context, tool orchestration) is a new surface that needs boundaries, evidence, and accountability.

And computer use adds a few constraints that bigger context windows do not magically solve:

- UI automation is brittle. Apps change, auth flows break, and "worked yesterday" becomes "stuck on a modal."

- Long-horizon runs are still dominated by cost and latency. When a 60-minute run fails at minute 58, the model was not the problem. The runtime was.

More capability does not remove governance.

It makes governance the product.

The Takeaway

GPT-5.4-style computer use pushes agents into a new category.

MCP's roadmap shows the tool layer is becoming infrastructure.

OpenShell-like approaches show the safety layer is moving outside the agent.

That is the runtime stack forming in real time.

If you are still thinking "model choice" is the main decision, you are late.

The winner is not the team with the smartest model.

It is the team with the most trustworthy runtime.

That is architecture.